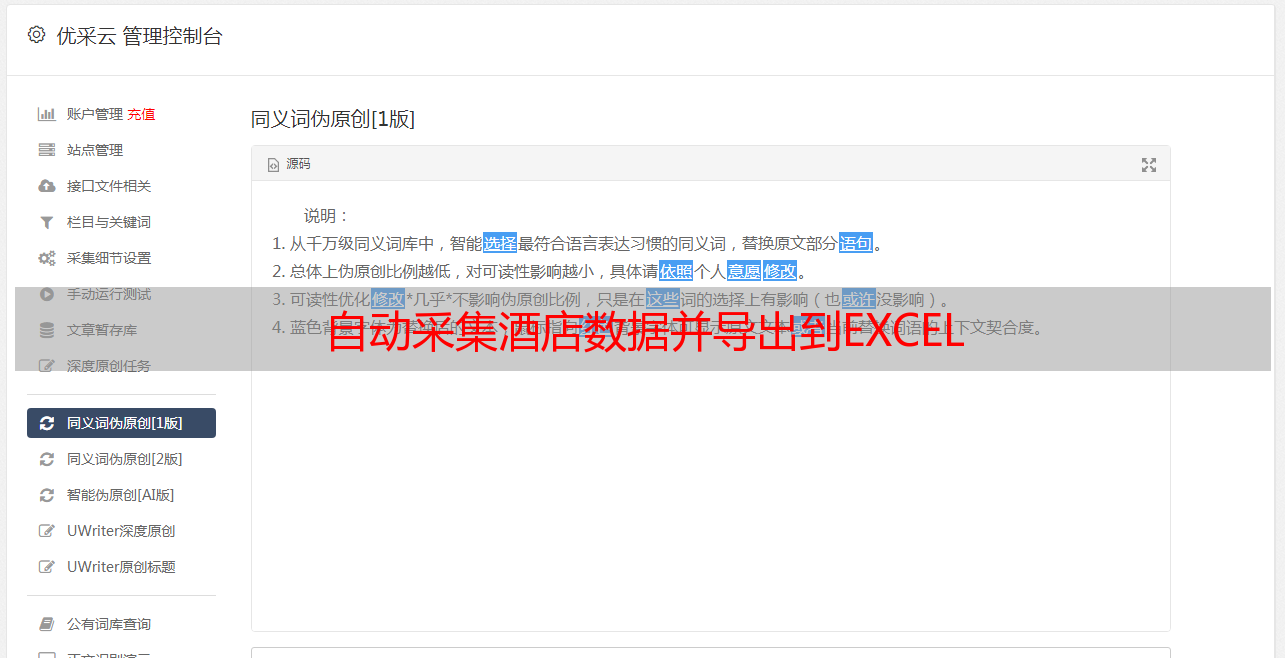

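Automatically Scraping Hotel Data and Exporting It to Excel

优采云 Published: 2020-08-06 19:13

Customer requirements

A hotel back-office management system stores information about partner hotels. There are more than 300 pages with 10 records per page, mainly covering each partner hotel's area, price, risk index, and similar fields. This information now needs to be compiled into Excel. Copying it by hand would be a lot of work and error-prone, so the customer suggested using a scraper to collect the data and export it to Excel.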

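Simulating login with the crawler

List page

Detail page

Detailed design process

First log in with the user name and password, then open the first list page, open the detail page of each record on it, and collect the data. Then repeat for the second page, and so on until the last page.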
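The traversal order described above can be sketched without Scrapy. This is only an illustration of the control flow: `crawl_all` and the two stand-in callbacks are hypothetical names, the login step is left out, and the real spider later in this article issues its requests asynchronously rather than in a plain loop.

```python
from typing import Callable, Iterable, Iterator


def crawl_all(
    total_pages: int,
    list_records: Callable[[int], Iterable[str]],
    fetch_detail: Callable[[str], dict],
) -> Iterator[dict]:
    """Visit list pages 1..total_pages in order and yield one dict per detail page."""
    for page in range(1, total_pages + 1):
        for record_id in list_records(page):
            yield fetch_detail(record_id)


# Stand-in callbacks so the sketch runs without a network connection.
def fake_list_records(page: int) -> list:
    return [f"p{page}-r{i}" for i in range(1, 3)]  # two records per page


def fake_fetch_detail(record_id: str) -> dict:
    return {"ID": record_id}


rows = list(crawl_all(2, fake_list_records, fake_fetch_detail))
print(rows)
```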

Scrapy

The scraper uses Scrapy, a Python crawling framework.

pip install scrapy

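Core source code

After a successful login, Scrapy handles the cookies automatically, so subsequent pages can be accessed normally. The responses are HTML and can be parsed with XPath. To avoid putting load on the server, run the crawl at night and set a 5-second delay in settings.py.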

DOWNLOAD_DELAY = 5
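
As a rough check on what this delay costs, the sketch below estimates a lower bound on the crawl time. It assumes the figures from the requirements (about 300 list pages with 10 records each), one request per list page and one per detail page, and requests spaced at least DOWNLOAD_DELAY seconds apart. The function name `min_crawl_hours` is made up for illustration.

```python
DOWNLOAD_DELAY = 5  # seconds, same value as in settings.py


def min_crawl_hours(list_pages: int, records_per_page: int, delay: float) -> float:
    """Lower bound on crawl time: one request per list page plus one per detail page."""
    total_requests = list_pages + list_pages * records_per_page
    return total_requests * delay / 3600


hours = min_crawl_hours(300, 10, DOWNLOAD_DELAY)
print(f"{hours:.1f} hours")
```

That comes to about 4.6 hours as a floor. Server response time, retries, and any pages beyond 300 add to it, so an overnight run is a reasonable plan.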

The source code of hotel.py is as follows:

import scrapy
from scrapy.http import FormRequest


class HotelSpider(scrapy.Spider):
    name = 'hotel'
    allowed_domains = ['checkhrs.zzzdex.com']
    login_url = 'http://checkhrs.zzzdex.com/piston/login'
    url = 'http://checkhrs.zzzdex.com/piston/hotel'
    start_urls = ['http://checkhrs.zzzdex.com/piston/hotel']

    # Headers sent with every request.
    request_headers = {
        'Content-Type': 'application/x-www-form-urlencoded; charset=UTF-8',
        'User-Agent': 'Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_3) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/80.0.3987.132 Safari/537.36'
    }

    def start_requests(self):
        # Fetch the login page first; the crawl starts only after logging in.
        yield scrapy.Request(self.login_url,
                             headers=self.request_headers,
                             dont_filter=True,
                             callback=self.login)

    def login(self, response):
        # Fill in and submit the login form found on the page.
        fd = {'username': 'usernamexxx', 'password': 'passwordxxx'}
        yield FormRequest.from_response(response,
                                        formdata=fd,
                                        callback=self.parse_login)

    def parse_login(self, response):
        # The user name appears in the page only after a successful login.
        if 'usernamexxx' in response.text:
            self.logger.info('login success!')
            yield from self.start_hotel_list()
        else:
            self.logger.error('login fail!')

    def start_hotel_list(self):
        # No callback is given, so Scrapy passes the response to parse().
        yield scrapy.Request(self.url,
                             headers=self.request_headers,
                             dont_filter=True)

    def parse(self, response):
        # One table row per hotel on the list page.
        for sel in response.xpath('//table//tbody//tr'):
            address = sel.xpath('.//td[3]/text()').extract_first()
            # "编辑" is the text of the site's "Edit" link to the detail page.
            next_url = sel.xpath('.//td//a[text()="编辑"]/@href').extract_first()
            if not next_url:
                continue  # skip rows without an edit link
            yield scrapy.Request(response.urljoin(next_url),
                                 meta={'address': address},
                                 headers=self.request_headers,
                                 dont_filter=True,
                                 callback=self.parseDetail)

        # Read the total page count and the current page from the pagination bar.
        totalStr = response.xpath('//ul[@class="pagination"]//li[last()-1]//text()').extract_first()
        pageStr = response.xpath('//ul[@class="pagination"]//li[@class="active"]//text()').extract_first()
        if totalStr is None or pageStr is None:
            self.logger.warning('pagination not found on %s', response.url)
            return
        total = int(totalStr)
        page = int(pageStr)
        if page >= total:
            self.logger.info('last page')
        else:
            next_page = self.url + '?page=' + str(page + 1)
            self.logger.info('continue next page: %s', next_page)
            yield scrapy.Request(next_page,
                                 headers=self.request_headers,
                                 dont_filter=True)

    def parseDetail(self, response):
        # The address from the list page has the form "country / province / city".
        address = response.meta.get('address') or ''
        addresses = address.split('/')
        country = ''
        province = ''
        city = ''
        address_len = len(addresses)
        if address_len > 0:
            country = addresses[0].strip()
        if address_len > 1:
            province = addresses[1].strip()
        if address_len > 2:
            city = addresses[2].strip()

        hotelId = response.xpath('//input[@id="id"]/@value').extract_first()
        group_list = response.xpath('//form//div[@class="form-group js_group"]')
        hotelName = group_list[1].xpath('.//input[@id="hotel_name"]/@value').extract_first()

        # A hotel can have several company blocks; emit one row per company.
        for sel in response.xpath('//form//div[contains(@class,"company_container")]'):
            company_list = sel.xpath('.//div[@class="panel-body"]//div[@class="form-group"]')
            # Company
            companyName = company_list[0].xpath('.//input[@id="company"]/@value').extract_first()
            # Price with one breakfast (单早)
            singlePrice = company_list[1].xpath('.//input/@value')[0].extract()
            # Price with two breakfasts (双早)
            doublePrice = company_list[1].xpath('.//input/@value')[1].extract()
            # Risk index
            risk = company_list[2].xpath('.//input/@value').extract_first()
            # Remarks
            remark = company_list[3].xpath('.//input/@value').extract_first()
            # The keys are the Chinese column headers of the output file:
            # ID, country, province, city, hotel name, company,
            # single-breakfast price, double-breakfast price, risk index, remarks.
            yield {
                'ID': hotelId,
                '国家': country,
                '省份': province,
                '城市': city,
                '酒店名': hotelName,
                '公司': companyName,
                '单早': singlePrice,
                '双早': doublePrice,
                '风险指数': risk,
                '备注': remark
            }
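
The address-splitting and next-page logic in the spider can also be pulled out into plain functions, which makes them easy to test without running a crawl. This is a sketch, not part of the original hotel.py; the names `split_address` and `next_page_url` are hypothetical, and the `?page=` query format is taken from the spider above.

```python
from typing import Optional, Tuple


def split_address(address: Optional[str]) -> Tuple[str, str, str]:
    """Split 'country / province / city' into three stripped parts, padding with ''."""
    parts = [p.strip() for p in (address or "").split("/")]
    parts += [""] * (3 - len(parts))  # no-op when there are already 3 or more parts
    return parts[0], parts[1], parts[2]


def next_page_url(base_url: str, page: int, total: int) -> Optional[str]:
    """Return the URL of the page after `page`, or None on the last page."""
    if page >= total:
        return None
    return f"{base_url}?page={page + 1}"


print(split_address("China / Zhejiang / Hangzhou"))
print(split_address("China"))
print(next_page_url("http://checkhrs.zzzdex.com/piston/hotel", 1, 300))
```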

Running

scrapy crawl hotel -o ./outputs/hotel.csv
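
Because the column headers are Chinese and the file is meant for Excel, two optional feed-export settings may help. They are not mentioned in the original project and are shown here only as a suggestion: `FEED_EXPORT_ENCODING` and `FEED_EXPORT_FIELDS` are standard Scrapy settings, and the field list assumes the item keys used in hotel.py.

```python
# settings.py (sketch; optional additions, not from the original project)

# Write the CSV with a UTF-8 byte-order mark so that Excel detects the
# encoding and displays the Chinese column headers correctly.
FEED_EXPORT_ENCODING = 'utf-8-sig'

# Fix the column order of the exported file to match the item keys.
FEED_EXPORT_FIELDS = ['ID', '国家', '省份', '城市', '酒店名',
                      '公司', '单早', '双早', '风险指数', '备注']
```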

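The crawl started at 10 p.m. and had collected all the data by about noon the next day, with no errors. The final output was a CSV file, which was sorted by ID and then converted to Excel.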
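
The article does not say how the sorting was done. One way to do it with only the standard library is sketched below, assuming the IDs are integers. The function name `sort_csv_by_id` and the output path in the usage note are hypothetical.

```python
import csv


def sort_csv_by_id(src_path: str, dst_path: str, id_field: str = "ID") -> int:
    """Sort the rows of a CSV file numerically by id_field and write them to dst_path."""
    # utf-8-sig transparently skips a byte-order mark if the file has one.
    with open(src_path, newline="", encoding="utf-8-sig") as src:
        reader = csv.DictReader(src)
        fieldnames = reader.fieldnames or []
        rows = sorted(reader, key=lambda row: int(row[id_field]))

    # Writing with a byte-order mark lets Excel recognise the file as UTF-8.
    with open(dst_path, "w", newline="", encoding="utf-8-sig") as dst:
        writer = csv.DictWriter(dst, fieldnames=fieldnames)
        writer.writeheader()
        writer.writerows(rows)
    return len(rows)
```

A call such as `sort_csv_by_id('./outputs/hotel.csv', './outputs/hotel_sorted.csv')` would produce a sorted copy that can be opened in Excel and saved as a workbook. Writing .xlsx directly would need a third-party library.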

Summary

Using crawler technology in day-to-day work can improve efficiency and eliminate repetitive manual tasks.